【redis源码学习】看看redis的“哈希表”实现

文章目录 迭代器

抛砖引玉

先手写一个哈希表吧。

三小时过去…

就这种源码中的数据结构啊,我个人是比较推崇大家自己先看概念手写一个,能不能动咱另说,在写的过程中会领悟到很多直接看所领悟不到的细节。

redis 中 哈希表的实现

哈希表主要看哪些方面?底层承载的数据结构、节点数据结构、哈希函数、冲突解决,还有啥?扩容与…

关于增删查改就不多说了吧,哈希表的增删查改,挺常见了。

哈希函数

redis 使用的是 ,计算出来的hash值具有强分布性,不过算法有点复杂(可以把“有点”,换成“比较”,反正我看晕了)。

uint64_t siphash(const uint8_t *in, const size_t inlen, const uint8_t *k) {

#ifndef UNALIGNED_LE_CPUuint64_t hash;uint8_t *out = (uint8_t*) &hash;

#endifuint64_t v0 = 0x736f6d6570736575ULL;uint64_t v1 = 0x646f72616e646f6dULL;uint64_t v2 = 0x6c7967656e657261ULL;uint64_t v3 = 0x7465646279746573ULL;uint64_t k0 = U8TO64_LE(k);uint64_t k1 = U8TO64_LE(k + 8);uint64_t m;const uint8_t *end = in + inlen - (inlen % sizeof(uint64_t));const int left = inlen & 7;uint64_t b = ((uint64_t)inlen) << 56;v3 ^= k1;v2 ^= k0;v1 ^= k1;v0 ^= k0;for (; in != end; in += 8) {m = U8TO64_LE(in);v3 ^= m;SIPROUND;v0 ^= m;}switch (left) {case 7: b |= ((uint64_t)in[6]) << 48; /* fall-thru */case 6: b |= ((uint64_t)in[5]) << 40; /* fall-thru */case 5: b |= ((uint64_t)in[4]) << 32; /* fall-thru */case 4: b |= ((uint64_t)in[3]) << 24; /* fall-thru */case 3: b |= ((uint64_t)in[2]) << 16; /* fall-thru */case 2: b |= ((uint64_t)in[1]) << 8; /* fall-thru */case 1: b |= ((uint64_t)in[0]); break;case 0: break;}v3 ^= b;SIPROUND;v0 ^= b;v2 ^= 0xff;SIPROUND;SIPROUND;b = v0 ^ v1 ^ v2 ^ v3;

#ifndef UNALIGNED_LE_CPUU64TO8_LE(out, b);return hash;

#elsereturn b;

#endif

}

网上也有关于这个算法的解释,有兴趣的朋友可以自己找来看,准备点小零食,加杯咖啡,有条件可以再来包袋装方便面,不要拆,以备不时之需呀!

冲突解决

开链法。不用多说了吧。

表结构

再往下就没太多意思了,我就意思意思吧。本来以为这篇会很难写的,没想到难的部分我直接就看不懂了,倒是轻松了。

/* This is our hash table structure. Every dictionary has two of this as we* implement incremental rehashing, for the old to the new table. */

typedef struct dictht {dictEntry **table; //存储键值对unsigned long size; //计算table总大小unsigned long sizemask; //掩码unsigned long used; //记录开的链里面有几个节点

} dictht;

单个节点

typedef struct dictEntry {void *key;union {void *val;uint64_t u64;int64_t s64;double d;} v;struct dictEntry *next;

} dictEntry; //喏,这里体现出开链了这个 v 我解释一下,是个联合体看得出来,存的是值,为什么要用联合体?因为可以在不同场景下发挥不同的作用。这个技法学了好多次都记不住。

如:

存数据键值对的时候,用val;

存过期时间的时候,用s64;

容量变化

先说说扩容吧,拿源码直接看:

typedef struct dict {dictType *type;void *privdata;dictht ht[2];long rehashidx; /* rehashing not in progress if rehashidx == -1 */unsigned long iterators; /* number of iterators currently running */

} dict;

/* Expand or create the hash table */

int dictExpand(dict *d, unsigned long size)

{/* the size is invalid if it is smaller than the number of* elements already inside the hash table */if (dictIsRehashing(d) || d->ht[0].used > size)return DICT_ERR;dictht n; /* the new hash table */unsigned long realsize = _dictNextPower(size);/* Rehashing to the same table size is not useful. */if (realsize == d->ht[0].size) return DICT_ERR;/* Allocate the new hash table and initialize all pointers to NULL */n.size = realsize;n.sizemask = realsize-1;n.table = zcalloc(realsize*sizeof(dictEntry*));n.used = 0;/* Is this the first initialization? If so it's not really a rehashing* we just set the first hash table so that it can accept keys. */if (d->ht[0].table == NULL) {d->ht[0] = n;return DICT_OK;}/* Prepare a second hash table for incremental rehashing */d->ht[1] = n;d->rehashidx = 0;return DICT_OK;

}

光看上面的,有点未见全貌。

/* This is the initial size of every hash table */

#define DICT_HT_INITIAL_SIZE 4

/* Expand the hash table if needed */

static int _dictExpandIfNeeded(dict *d)

{/* Incremental rehashing already in progress. Return. */if (dictIsRehashing(d)) return DICT_OK;/* If the hash table is empty expand it to the initial size. */if (d->ht[0].size == 0) return dictExpand(d, DICT_HT_INITIAL_SIZE);/* If we reached the 1:1 ratio, and we are allowed to resize the hash* table (global setting) or we should avoid it but the ratio between* elements/buckets is over the "safe" threshold, we resize doubling* the number of buckets. */if (d->ht[0].used >= d->ht[0].size &&(dict_can_resize ||d->ht[0].used/d->ht[0].size > dict_force_resize_ratio)){return dictExpand(d, d->ht[0].used*2);}return DICT_OK;

}

看一下真正作为底层被调用的函数,就清楚了。

所以扩容的步骤是:

1、如果是第一次,那就直接给 4 个 。

2、如果不是第一次,那就翻倍给。

3、把新申请内存的地址存放在ht[1],并将 标识由 -1 转 0,表示需要进行 操作。

4、进行操作。

/* Performs N steps of incremental rehashing. Returns 1 if there are still* keys to move from the old to the new hash table, otherwise 0 is returned.** Note that a rehashing step consists in moving a bucket (that may have more* than one key as we use chaining) from the old to the new hash table, however* since part of the hash table may be composed of empty spaces, it is not* guaranteed that this function will rehash even a single bucket, since it* will visit at max N*10 empty buckets in total, otherwise the amount of* work it does would be unbound and the function may block for a long time. */

int dictRehash(dict *d, int n) {int empty_visits = n*10; /* Max number of empty buckets to visit. */if (!dictIsRehashing(d)) return 0;while(n-- && d->ht[0].used != 0) {dictEntry *de, *nextde;/* Note that rehashidx can't overflow as we are sure there are more* elements because ht[0].used != 0 */assert(d->ht[0].size > (unsigned long)d->rehashidx);while(d->ht[0].table[d->rehashidx] == NULL) {d->rehashidx++;if (--empty_visits == 0) return 1;}de = d->ht[0].table[d->rehashidx];/* Move all the keys in this bucket from the old to the new hash HT */while(de) {uint64_t h;nextde = de->next;/* Get the index in the new hash table */h = dictHashKey(d, de->key) & d->ht[1].sizemask;de->next = d->ht[1].table[h];d->ht[1].table[h] = de;d->ht[0].used--;d->ht[1].used++;de = nextde;}d->ht[0].table[d->rehashidx] = NULL;d->rehashidx++;}/* Check if we already rehashed the whole table... */if (d->ht[0].used == 0) {zfree(d->ht[0].table);d->ht[0] = d->ht[1];_dictReset(&d->ht[1]);d->rehashidx = -1;return 0;}/* More to rehash... */return 1;

}

服务繁忙时的渐进式!!!

/* This function performs just a step of rehashing, and only if there are* no safe iterators bound to our hash table. When we have iterators in the* middle of a rehashing we can't mess with the two hash tables otherwise* some element can be missed or duplicated.** This function is called by common lookup or update operations in the* dictionary so that the hash table automatically migrates from H1 to H2* while it is actively used. */

static void _dictRehashStep(dict *d) {if (d->iterators == 0) dictRehash(d,1);

}

当redis中数据量大的时候,整个过程将非常缓慢。

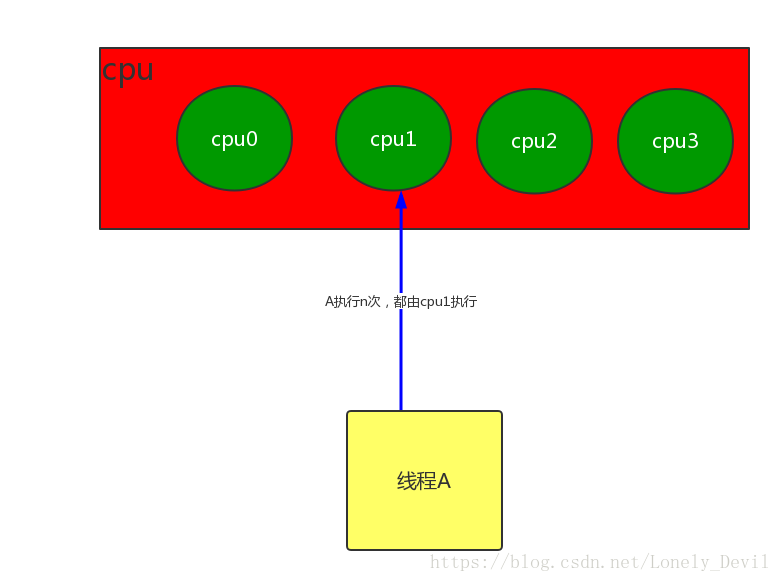

redis采用了“分而治之”的思想,执行增删查改前,先判断当前字典是否在执行。如果是,则一个节点。

服务空闲时的批量!!!

/* Rehash for an amount of time between ms milliseconds and ms+1 milliseconds */

int dictRehashMilliseconds(dict *d, int ms) {long long start = timeInMilliseconds();int rehashes = 0;while(dictRehash(d,100)) {rehashes += 100;if (timeInMilliseconds()-start > ms) break;}return rehashes;

}

每次对100个节点进行操作。

迭代器

redis 的迭代器也是很有特色的。它不仅提供了我们平时使用的迭代器,还提供了另一种边迭代边删除数据的迭代器。

其实迭代数据和删除数据操作分开来看都不难,但是鉴于redis是单线程操作,所以需要尽可能的将多个操作合成少量操作,以此提高效率,于是就出现了这种“新型迭代器”。

其实把俩操作合在一起,也很简单嘛。但是放在这么一个场景了,那就不简单了。毕竟redis是存在渐进式的。你说我遍历一个,你一次,这成何体统?这数据不就乱套了嘛。

所以,这个安全迭代器在迭代的时候,会促使被迫暂停营业。

间接迭代,防止大批量数据查询卷死自己

那,如果有人大批量的获取数据呢?这迭代器不得累死吗?迭代器累死就算了,别把整个redis给累死啊,那问题就大了。

所以,redis采用了间接迭代的方式。

这里稍微提一下,可以参考MySQL的批量插入。

使用游标啦。。

吃饭吃饭,不然它还没卷死我就先饿死了。。