Python sticker-pack crawler: scraping sticker packs from 站长素材 with a Python web crawler

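I spend a lot of time reading group chats but have saved very few sticker packs, so I lose every time a sticker battle breaks out. I recently took 嵩天's course on web crawling and information extraction on 中国大学MOOC, so I decided to write a web crawler that downloads sticker packs from the web. A quick search showed that 站长素材 has a rich collection: 446 pages with 10 sticker packs per page, which comes to more than 4,000 packs and nearly ten thousand individual stickers. Let's see who dares to challenge me now.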

Page analysis

The first page of sticker packs on 站长素材 looks like this:

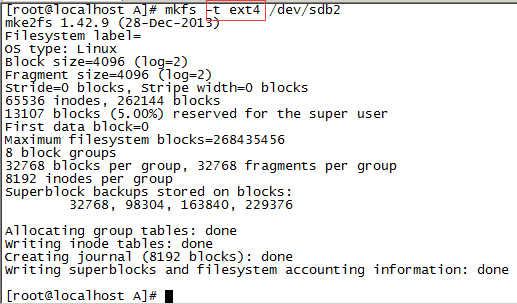

Next, look at the source code of each sticker-pack list page:
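To make the structure concrete, here is a minimal sketch of the markup the crawler's selectors appear to rely on: each pack sits in a `div` with class `up`, which contains a `div` with class `num_1` wrapping an `a` tag whose `title` and `href` carry the pack name and link. The two class names come from the code in this article. The sample HTML, the `example.com` URLs and the `PackLinkParser` class are hypothetical, and the parser uses the standard library's `html.parser` rather than BeautifulSoup so that it runs without extra installs.

```python
from html.parser import HTMLParser

# Hypothetical sample of a list page; the real markup may differ.
SAMPLE_LIST_PAGE = """
<div class="up">
  <div class="num_1"><a href="http://example.com/pack/1.htm" title="Cat pack">Cat pack</a></div>
</div>
<div class="up">
  <div class="num_1"><a href="http://example.com/pack/2.htm" title="Dog pack">Dog pack</a></div>
</div>
"""


class PackLinkParser(HTMLParser):
    """Collect (title, href) pairs from <a> tags nested in div.up > div.num_1."""

    def __init__(self):
        super().__init__()
        self.div_classes = []  # class values of the currently open <div> tags
        self.packs = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "div":
            self.div_classes.append(attrs.get("class") or "")
        elif tag == "a" and "up" in self.div_classes and "num_1" in self.div_classes:
            self.packs.append((attrs.get("title"), attrs.get("href")))

    def handle_endtag(self, tag):
        if tag == "div" and self.div_classes:
            self.div_classes.pop()


parser = PackLinkParser()
parser.feed(SAMPLE_LIST_PAGE)
for title, href in parser.packs:
    print(title, href)
```

Each tuple pairs a pack title with the link to that pack's detail page, which is the input the second step needs.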

Then look at the page that lists all the stickers in a single pack:

Steps

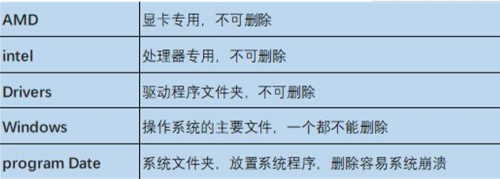

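1. Get the link and title of every sticker pack shown on each list page.

2. Get the links of all the stickers in each pack.

3. Download each sticker through its link, saving every pack's stickers in a separate folder named after the pack's title attribute.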
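Because step 3 uses the pack title as a folder name, a title containing characters such as `/`, `:` or `?` can make folder creation fail, especially on Windows. The sketch below shows one possible way to guard against that. The helpers `safe_folder_name` and `pack_folder` are hypothetical and not part of the original code, and replacing illegal characters with `_` is just one reasonable choice.

```python
import os
import re
import tempfile


def safe_folder_name(title):
    """Replace characters that Windows forbids in file names with '_'."""
    cleaned = re.sub(r'[\\/:*?"<>|]', "_", title).strip()
    return cleaned or "untitled"


def pack_folder(root, title):
    """Create (if needed) and return the folder for one sticker pack."""
    folder = os.path.join(root, safe_folder_name(title))
    os.makedirs(folder, exist_ok=True)  # no error if the folder already exists
    return folder


# Demo in a temporary directory so nothing is written to a real location.
root = tempfile.mkdtemp()
folder = pack_folder(root, "cats: best of 1/2")
print(os.path.basename(folder))
```

Using `os.makedirs(..., exist_ok=True)` also removes the need for a separate existence check before creating the folder.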

Code

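# -*- coding: utf-8 -*-
'''
Created on 2017-03-18
@author: lavi
'''
import bs4
from bs4 import BeautifulSoup
import re
import os
# requests and traceback are used by the functions in this listing
import requests
import traceback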

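'''
Fetch the text content of a page.
'''
# The function name is missing in the source; get_html_text is a stand-in.
def get_html_text(url):
    try:
        r = requests.get(url, timeout=30)
        r.raise_for_status()
        r.encoding = r.apparent_encoding
        return r.text
    except Exception:
        return ""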

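'''
Fetch the raw bytes of a response (for example, an image).
'''
# The function name is missing in the source; get_img_content is a stand-in.
def get_img_content(url):
    head = {"user-agent": "Mozilla/5.0"}
    try:
        r = requests.get(url, headers=head, timeout=30)
        # status_code is an int, so convert it before concatenating
        print("status_code:" + str(r.status_code))
        r.raise_for_status()
        return r.content
    except Exception:
        return None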

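'''
Collect the title and link of every sticker pack on a list page.
'''
# The function and variable names are missing in the source; these are stand-ins.
def get_pack_links(html, pack_links):
    soup = BeautifulSoup(html, 'html.parser')
    divs = soup.find_all("div", attrs={"class": "up"})
    for div in divs:
        a = div.find("div", attrs={"class": "num_1"}).find("a")
        title = a.attrs["title"]
        pack_url = a.attrs["href"]
        pack_links.append((title, pack_url))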

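# Collect the image links of every pack into a dict keyed by pack title.
# The function and variable names are missing in the source; these are stand-ins.
def get_img_urls(pack_links, img_url_dict):
    for pack in pack_links:
        title = pack[0]
        url = pack[1]
        img_urls = []
        # get_html_text is the page-fetching helper defined earlier in this listing
        html = get_html_text(url)
        soup = BeautifulSoup(html, "html.parser")
        #print(soup.prettify())
        # The class name is missing in the source, so it is left empty here.
        div = soup.find("div", attrs={"class": ""})
        #print(type(div))
        # The two attribute names after `div` are missing in the source;
        # next_sibling.next_sibling is an assumption (it skips a whitespace text node).
        img_div = div.next_sibling.next_sibling
        #print(type(img_div))
        imgs = img_div.find_all("img")
        for img in imgs:
            src = img.attrs["src"]
            img_urls.append(src)
        img_url_dict[title] = img_urls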

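# Download every image, one folder per pack, skipping files that already exist.
# The function and variable names are missing in the source; these are stand-ins.
def get_images(img_url_dict, file_path):
    head = {"user-agent": "Mozilla/5.0"}
    count_dir = 0
    for title, img_urls in img_url_dict.items():
        #print(title + ":" + str(img_urls))
        try:
            folder = file_path + title
            if not os.path.exists(folder):
                os.mkdir(folder)
            count_file = 0
            for img_url in img_urls:
                path = folder + "/" + img_url.split("/")[-1]
                #print(path)
                #print(img_url)
                if not os.path.exists(path):
                    r = requests.get(img_url, headers=head, timeout=30)
                    r.raise_for_status()
                    # the with block closes the file, so no explicit close() is needed
                    with open(path, "wb") as f:
                        f.write(r.content)
                    count_file = count_file + 1
                    print("Progress in current folder: {:.2f}%".format(count_file * 100 / len(img_urls)))
            count_dir = count_dir + 1
            print("Folder progress: {:.2f}%".format(count_dir * 100 / len(img_url_dict)))
        except Exception:
            # print_exc() prints the traceback itself and returns None
            traceback.print_exc()
            #print("from get_images: download failed")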
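The download step names each file after the last `/`-separated segment of the image URL. That works for plain URLs, but a query string or fragment would end up in the file name. As a hedged alternative, the sketch below takes the name from the URL path only. `filename_from_url` and the `example.com` URLs are hypothetical and only illustrate the idea.

```python
import posixpath
from urllib.parse import urlsplit, unquote


def filename_from_url(url, default="image"):
    """Return the last path segment of a URL, ignoring query and fragment."""
    path = urlsplit(url).path
    name = posixpath.basename(unquote(path))
    return name or default


print(filename_from_url("http://example.com/img/2017/cat_01.gif"))     # cat_01.gif
print(filename_from_url("http://example.com/img/cat_02.gif?v=3#top"))  # cat_02.gif
print(filename_from_url("http://example.com/img/"))                    # image
```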

def main():

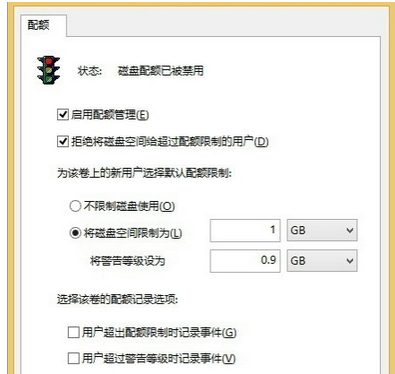

    # To avoid filling up the disk, don't fetch every sticker: only crawl 30 pages, about 300 sticker packs.
    pages = 30
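A page limit such as `pages = 30` would typically drive a loop over the list pages. The sketch below shows one way such a loop could build its URLs. Everything specific in it is an assumption for illustration: the `example.com` root, the `index.html` / `index_2.html` naming pattern and the `build_page_urls` helper are not taken from the original code, so the real site's pagination scheme should be checked in the browser first.

```python
from urllib.parse import urljoin


def build_page_urls(root, pages):
    """Build list-page URLs, assuming page 1 is index.html and page n is index_n.html."""
    urls = []
    for page in range(1, pages + 1):
        name = "index.html" if page == 1 else "index_{}.html".format(page)
        urls.append(urljoin(root, name))
    return urls


# Hypothetical root URL; replace it with the real list-page root.
urls = build_page_urls("http://example.com/biaoqing/", 30)
print(len(urls))
print(urls[0])
print(urls[-1])
```

Each URL would then be fetched and passed through the three steps: collect pack links, collect image links, download.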