Zero-Basics Introduction to Data Mining Competitions (Tianchi Used-Car Transaction Price Prediction)

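These are my Task 4 notes for the introductory data mining course built around the used-car transaction price prediction competition hosted on Tianchi.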

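Task 4: Modeling and Parameter Tuning

Linear regression model

1. What linear regression requires of its features;

2. Handling long-tailed distributions;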

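# Run the code in the "Tips" section at the end first: it loads sample_feature and defines continuous_feature_names

import numpy as np

import pandas as pd

# Drop rows with missing values and replace the '-' placeholder with 0

sample_feature = sample_feature.dropna().replace('-', 0).reset_index(drop=True)

sample_feature['notRepairedDamage'] = sample_feature['notRepairedDamage'].astype(np.float32)

train = sample_feature[continuous_feature_names + ['price']]

train_X = train[continuous_feature_names]

train_y = train['price']

# Simple modeling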

from sklearn.linear_model import LinearRegression

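# The normalize argument was removed from LinearRegression in scikit-learn 1.2; ordinary least squares gives the same fit without it

model = LinearRegression()

model = model.fit(train_X, train_y)

# Inspect the intercept and the weights (coef) of the fitted linear regression model

'intercept:' + str(model.intercept_)

sorted(dict(zip(continuous_feature_names, model.coef_)).items(), key=lambda x: x[1], reverse=True)

from matplotlib import pyplot as plt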

subsample_index = np.random.randint(low=0, high=len(train_y), size=50)

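# Scatter plot of feature v_9 against the label. The model's predictions (blue points) are distributed very differently from the true labels (black points), and some predictions fall below 0, which shows that the model has problems

plt.scatter(train_X['v_9'][subsample_index], train_y[subsample_index], color='black')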

plt.scatter(train_X['v_9'][subsample_index], model.predict(train_X.loc[subsample_index]), color='blue')

plt.xlabel('v_9')

plt.ylabel('price')

plt.legend(['True Price','Predicted Price'],loc='upper right')

print('The predicted price is obviously different from the true price')

plt.show()

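# Plotting shows that the label (price) follows a long-tailed distribution, which hurts our modeling. Many models assume that the error term is normally distributed, and long-tailed data violates this assumption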

import seaborn as sns

print('It is clear to see the price shows a typical exponential distribution')

plt.figure(figsize=(15,5))

plt.subplot(1,2,1)

sns.distplot(train_y)

plt.subplot(1,2,2)

sns.distplot(train_y[train_y < np.quantile(train_y, 0.9)])

# Apply a log(x+1) transform to the label so that it is closer to a normal distribution

train_y_ln = np.log(train_y + 1)

print('The transformed price seems like normal distribution')

plt.figure(figsize=(15,5))

plt.subplot(1,2,1)

sns.distplot(train_y_ln)

plt.subplot(1,2,2)

sns.distplot(train_y_ln[train_y_ln < np.quantile(train_y_ln, 0.9)])

# Refit the model on the log-transformed label

model = model.fit(train_X, train_y_ln)

print('intercept:' + str(model.intercept_))

sorted(dict(zip(continuous_feature_names, model.coef_)).items(), key=lambda x: x[1], reverse=True)

# Visualize again: the predictions are now close to the true values, and no anomalies appear

plt.scatter(train_X['v_9'][subsample_index], train_y[subsample_index], color='black')

# np.expm1 computes exp(y) - 1, the exact inverse of the log(x+1) transform applied to the label

plt.scatter(train_X['v_9'][subsample_index], np.expm1(model.predict(train_X.loc[subsample_index])), color='blue')

plt.xlabel('v_9')

plt.ylabel('price')

plt.legend(['True Price','Predicted Price'],loc='upper right')

print('The predicted price seems normal after np.log transforming')

plt.show()
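To make the log(x+1) transform and its inverse concrete, here is a minimal standard-library sketch. The price list is made up for illustration and is not taken from the competition data; the point is only that the mean is pulled far from the median on the raw scale and much less so on the log scale, and that exp(y) - 1 recovers the original values.

```python
import math
import statistics

# Hypothetical prices with one very large value (a long right tail).
prices = [500, 800, 1200, 1500, 2500, 4000, 6500, 99000]

# Forward transform: log(x + 1), the same transform applied to the label above.
log_prices = [math.log1p(p) for p in prices]

# Inverse transform: exp(y) - 1 maps values back to the price scale.
recovered = [math.expm1(v) for v in log_prices]

# Ratio of mean to median: far from 1 on the raw scale, close to 1 after the transform.
raw_gap = statistics.mean(prices) / statistics.median(prices)
log_gap = statistics.mean(log_prices) / statistics.median(log_prices)

print(f"raw mean/median: {raw_gap:.2f}")
print(f"log mean/median: {log_gap:.2f}")
```

Predictions made by a model trained on the transformed label live on the log scale, so they need the inverse transform before being compared with real prices.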

Embedded feature selection

1. Lasso regression;

2. Ridge regression;

3. Decision trees;

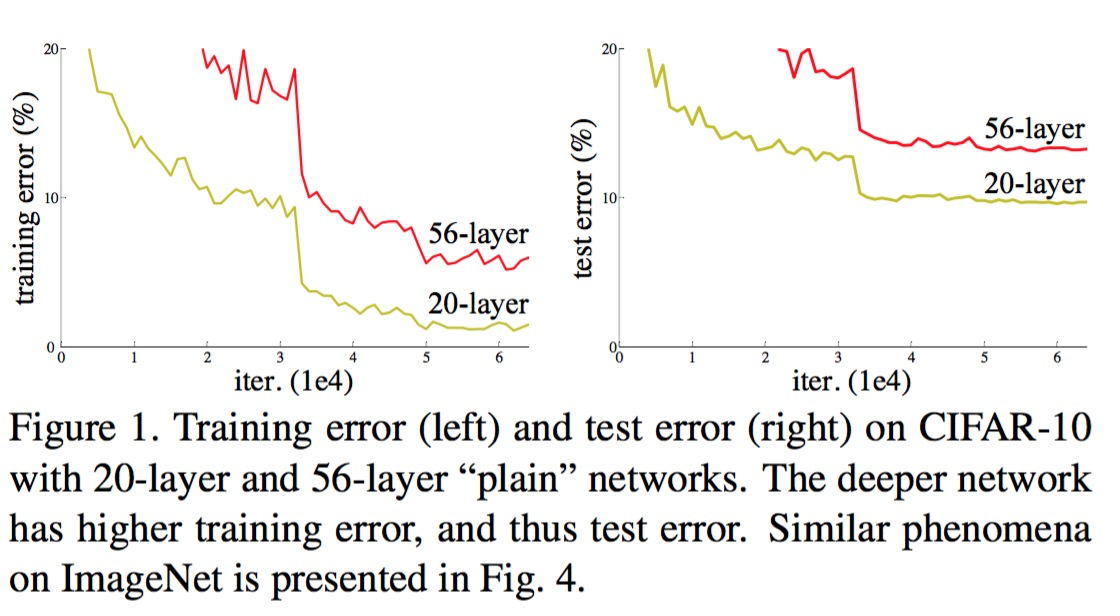
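Embedded methods select features as a side effect of fitting the model. The sketch below illustrates why Lasso can act as a selector while Ridge cannot, using a textbook special case that is an assumption of this example and not something the notes state: when the feature columns are orthonormal, the Lasso solution for the penalty (1/2)||y - Xb||² + λ||b||₁ is a soft-thresholded version of the least-squares coefficient, and the Ridge solution for (1/2)||y - Xb||² + (λ/2)||b||² is a uniformly scaled one. The coefficient values and the feature names other than v_9 are hypothetical.

```python
def soft_threshold(value, lam):
    """Lasso solution for one coefficient under an orthonormal design."""
    if value > lam:
        return value - lam
    if value < -lam:
        return value + lam
    return 0.0


def ridge_shrink(value, lam):
    """Ridge solution for one coefficient under an orthonormal design."""
    return value / (1.0 + lam)


# Hypothetical least-squares coefficients for four features.
ols_coefs = {"v_0": 3.0, "v_3": -1.5, "v_9": 0.4, "power": -0.1}
lam = 0.5

lasso_coefs = {name: soft_threshold(c, lam) for name, c in ols_coefs.items()}
ridge_coefs = {name: ridge_shrink(c, lam) for name, c in ols_coefs.items()}

# Lasso sets small coefficients exactly to zero, which drops those features.
selected = [name for name, c in lasso_coefs.items() if c != 0.0]

print("lasso:", lasso_coefs)
print("ridge:", ridge_coefs)
print("features kept by lasso:", selected)
```

Ridge shrinks every coefficient but leaves all of them nonzero, so by itself it ranks features and does not remove any.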

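Model comparison

1. Common linear models;

2. Common nonlinear models;

Model performance validation

1. Evaluation functions and objective functions;

2. Cross-validation;

3. Leave-one-out validation;

4. Validation for time-series problems;

5. Plotting learning curves;

6. Plotting validation curves;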
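For the time-series item in the list above, the key constraint is that a model must never be validated on data that precedes its training data. The following is a minimal sketch of one common scheme, an expanding window, written with the standard library only. The function name and the way the folds are sized are assumptions of this example; scikit-learn's TimeSeriesSplit is similar in spirit.

```python
def expanding_window_splits(n_samples, n_splits):
    """Yield (train_indices, test_indices) pairs that never train on the future."""
    fold_size = n_samples // (n_splits + 1)
    for i in range(1, n_splits + 1):
        train_end = i * fold_size
        # The last test fold absorbs any leftover samples.
        test_end = train_end + fold_size if i < n_splits else n_samples
        yield list(range(train_end)), list(range(train_end, test_end))


for train_idx, test_idx in expanding_window_splits(n_samples=10, n_splits=3):
    print("train:", train_idx, "test:", test_idx)
```

Each split trains on everything up to a cut-off and tests on the block that follows it, so the training window grows from one split to the next.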

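k-fold cross-validation

When fitting parameters on data, people commonly split the full dataset into three parts (as with the MNIST handwritten digit dataset): a training set, a validation set, and a test set. The split exists to make the evaluation trustworthy. The test set is the easiest to understand: it is data that takes no part in training and is used only to observe how the model performs. In practice a trained model usually fits the training set quite well (and is sensitive to initial conditions), but its fit to data outside the training set is often less satisfying. So we usually do not train on all the data. Instead we hold out a part that does not participate in training and use it to test the parameters learned from the training set, which gives a relatively objective measure of how well those parameters fit unseen data. This idea is called cross-validation. In k-fold cross-validation the data is split into k folds, and each fold serves once as the held-out part while the remaining k-1 folds are used for training.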
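The splitting step of k-fold cross-validation can be sketched in a few lines of standard-library Python. This is an illustration with contiguous, unshuffled folds and a hypothetical helper name, not the implementation used by scikit-learn.

```python
def k_fold_indices(n_samples, k):
    """Split range(n_samples) into k contiguous folds and yield (train, valid) index lists."""
    # The first n_samples % k folds get one extra sample so every sample is used exactly once.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        valid = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, valid
        start += size


for fold, (train_idx, valid_idx) in enumerate(k_fold_indices(n_samples=10, k=5), start=1):
    print(f"fold {fold}: train={train_idx} valid={valid_idx}")
```

Every sample lands in the validation part of exactly one fold, so averaging the k validation scores uses all of the data for evaluation without ever scoring a model on samples it was trained on.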

from sklearn.model_selection import cross_val_score

from sklearn.metrics import mean_absolute_error, make_scorer

def log_transfer(func):
    # Wrap a metric so that it is evaluated on log-transformed values
    def wrapper(y, yhat):
        result = func(np.log(y), np.nan_to_num(np.log(yhat)))
        return result
    return wrapper

# Five-fold cross-validation of the linear regression model on the untransformed label (Error 1.36)

scores = cross_val_score(model, X=train_X, y=train_y, verbose=1, cv=5, scoring=make_scorer(log_transfer(mean_absolute_error)))

print('AVG:', np.mean(scores))

# Five-fold cross-validation of the linear regression model on the log-transformed label (Error 0.19)

scores = cross_val_score(model, X=train_X, y=train_y_ln, verbose=1, cv = 5, scoring=make_scorer(mean_absolute_error))

print('AVG:', np.mean(scores))

"""AVG: 0.19382863663604424"""

scores = pd.DataFrame(scores.reshape(1,-1))

scores.columns = ['cv' + str(x) for x in range(1, 6)]

scores.index = ['MAE']

scores
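The log_transfer wrapper above exists so that both runs are scored on the same log scale. As a rough standard-library illustration of what that metric measures, the sketch below compares MAE on raw prices with MAE on log prices. The numbers are invented, and unlike the NumPy version, this simplified wrapper maps any non-positive prediction to a log value of 0.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error of two equally long sequences."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def log_transfer(func):
    """Evaluate func on log values; a non-positive prediction counts as log value 0."""
    def wrapper(y, yhat):
        log_y = [math.log(v) for v in y]
        log_yhat = [math.log(v) if v > 0 else 0.0 for v in yhat]
        return func(log_y, log_yhat)
    return wrapper


# Hypothetical true and predicted prices.
y_true = [1000, 5000, 20000]
y_pred = [1500, 4000, 30000]

raw_mae = mae(y_true, y_pred)
log_mae = log_transfer(mae)(y_true, y_pred)

print(f"MAE on prices:     {raw_mae:.2f}")
print(f"MAE on log prices: {log_mae:.4f}")
```

On the log scale an error depends on the ratio between prediction and truth, so predicting 1500 for 1000 and 30000 for 20000 contribute the same amount even though the absolute gaps differ by a factor of 20.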

Plotting learning curves and validation curves

from sklearn.model_selection import learning_curve, validation_curve

def plot_learning_curve(estimator, title, X, y, ylim=None, cv=None, n_jobs=1, train_size=np.linspace(.1, 1.0, 5)):
    plt.figure()
    plt.title(title)
    if ylim is not None:
        plt.ylim(*ylim)
    plt.xlabel('Training example')
    plt.ylabel('score')
    train_sizes, train_scores, test_scores = learning_curve(estimator, X, y, cv=cv, n_jobs=n_jobs, train_sizes=train_size, scoring=make_scorer(mean_absolute_error))
    train_scores_mean = np.mean(train_scores, axis=1)
    train_scores_std = np.std(train_scores, axis=1)
    test_scores_mean = np.mean(test_scores, axis=1)
    test_scores_std = np.std(test_scores, axis=1)
    plt.grid()
    # Shaded bands: one standard deviation around each mean score
    plt.fill_between(train_sizes, train_scores_mean - train_scores_std, train_scores_mean + train_scores_std, alpha=0.1, color="r")
    plt.fill_between(train_sizes, test_scores_mean - test_scores_std, test_scores_mean + test_scores_std, alpha=0.1, color="g")
    plt.plot(train_sizes, train_scores_mean, 'o-', color='r', label="Training score")
    plt.plot(train_sizes, test_scores_mean, 'o-', color="g", label="Cross-validation score")
    plt.legend(loc="best")
    return plt

plot_learning_curve(LinearRegression(), 'Linear_model', train_X[:1000], train_y_ln[:1000], ylim=(0.0, 0.5), cv=5, n_jobs=1)
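What a learning curve computes can be shown without any plotting: fit the same model on growing subsets of the training data and record the training and validation error each time. The sketch below does this for a one-feature least-squares line on synthetic data. The data, the subset sizes, and the helper names are all assumptions made for illustration.

```python
import random


def fit_line(xs, ys):
    """Closed-form least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / var_x
    return slope, mean_y - slope * mean_x


def line_mae(xs, ys, slope, intercept):
    """Mean absolute error of the fitted line on the given points."""
    return sum(abs(y - (slope * x + intercept)) for x, y in zip(xs, ys)) / len(xs)


# Synthetic data: y = 2x + 1 plus Gaussian noise with standard deviation 1.
random.seed(0)
xs = [random.uniform(0, 10) for _ in range(200)]
ys = [2.0 * x + 1.0 + random.gauss(0, 1.0) for x in xs]
fit_x, fit_y, valid_x, valid_y = xs[:150], ys[:150], xs[150:], ys[150:]

curve = []
for size in (5, 20, 50, 150):
    slope, intercept = fit_line(fit_x[:size], fit_y[:size])
    train_err = line_mae(fit_x[:size], fit_y[:size], slope, intercept)
    valid_err = line_mae(valid_x, valid_y, slope, intercept)
    curve.append((size, train_err, valid_err))

for size, train_err, valid_err in curve:
    print(f"n={size:3d}  train MAE={train_err:.3f}  valid MAE={valid_err:.3f}")
```

A large gap between the two errors at small sizes that narrows as more data is added suggests the model benefits from more samples; two curves that stay close at a high error suggest the model is too simple for the data.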

Model tuning

1. Greedy tuning;

2. Grid search;

3. Bayesian tuning;

# Candidate parameter sets for LGB:

objective = ['regression', 'regression_l1', 'mape', 'huber', 'fair']

num_leaves = [3,5,10,15,20,40, 55]

max_depth = [3,5,10,15,20,40, 55]

bagging_fraction = []

feature_fraction = []

drop_rate = []

Greedy tuning

from lightgbm import LGBMRegressor

# Step 1: find the objective with the lowest cross-validated MAE
best_obj = dict()
for obj in objective:
    model = LGBMRegressor(objective=obj)
    score = np.mean(cross_val_score(model, X=train_X, y=train_y_ln, verbose=0, cv=5, scoring=make_scorer(mean_absolute_error)))
    best_obj[obj] = score

# Step 2: keep the best objective fixed and tune num_leaves
best_leaves = dict()
for leaves in num_leaves:
    model = LGBMRegressor(objective=min(best_obj.items(), key=lambda x: x[1])[0], num_leaves=leaves)
    score = np.mean(cross_val_score(model, X=train_X, y=train_y_ln, verbose=0, cv=5, scoring=make_scorer(mean_absolute_error)))
    best_leaves[leaves] = score

# Step 3: keep the best objective and num_leaves fixed and tune max_depth
best_depth = dict()
for depth in max_depth:
    model = LGBMRegressor(objective=min(best_obj.items(), key=lambda x: x[1])[0], num_leaves=min(best_leaves.items(), key=lambda x: x[1])[0], max_depth=depth)
    score = np.mean(cross_val_score(model, X=train_X, y=train_y_ln, verbose=0, cv=5, scoring=make_scorer(mean_absolute_error)))
    best_depth[depth] = score

sns.lineplot(x=['0_initial','1_tuning_obj','2_tuning_leaves','3_tuning_depth'], y=[0.143, min(best_obj.values()), min(best_leaves.values()), min(best_depth.values())])
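The greedy procedure above generalizes to any number of parameters: tune one parameter at a time and freeze its best value before moving on. Here is a minimal sketch of that loop with a made-up scoring function standing in for the cross-validated MAE, so it runs without LightGBM. The function toy_score, its optimum, and the starting values are all hypothetical.

```python
def toy_score(num_leaves, max_depth):
    """Hypothetical validation error; lower is better. Stands in for a cross-validated MAE."""
    return abs(num_leaves - 40) / 100 + abs(max_depth - 10) / 50 + 0.13


search_space = {
    "num_leaves": [3, 5, 10, 15, 20, 40, 55],
    "max_depth": [3, 5, 10, 15, 20, 40, 55],
}

# Start from arbitrary defaults, then tune one parameter at a time and freeze its best value.
best_params = {"num_leaves": 31, "max_depth": 55}
evaluations = 0
for name, candidates in search_space.items():
    scores = {}
    for value in candidates:
        trial = {**best_params, name: value}
        scores[value] = toy_score(**trial)
        evaluations += 1
    best_params[name] = min(scores, key=scores.get)

print(best_params, "evaluations:", evaluations)
```

The cost grows with the sum of the candidate list lengths, not their product. The trade-off is that the result depends on the tuning order, and when parameters interact the greedy path can miss the best combination.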

Grid search

from sklearn.model_selection import GridSearchCV

parameters = {'objective': objective, 'num_leaves': num_leaves, 'max_depth': max_depth}

model = LGBMRegressor()

clf = GridSearchCV(model, parameters, cv=5)

# Note: the search is fit on the raw price here, while the score below uses the log-transformed label

clf = clf.fit(train_X, train_y)

clf.best_params_

"""{'max_depth': 15, 'num_leaves': 55, 'objective': 'regression'}"""

model = LGBMRegressor(objective='regression', num_leaves=55, max_depth=15)

np.mean(cross_val_score(model, X=train_X, y=train_y_ln, verbose=0, cv=5, scoring=make_scorer(mean_absolute_error)))
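Grid search evaluates every combination of the candidate values. The sketch below shows that enumeration with itertools.product and a made-up scoring function whose two parameters interact. The function toy_score and its target value are hypothetical and only stand in for a cross-validated error.

```python
from itertools import product


def toy_score(num_leaves, max_depth):
    """Hypothetical validation error with an interaction term; lower is better."""
    return abs(num_leaves * max_depth - 600) / 1000 + 0.13


param_grid = {
    "num_leaves": [3, 5, 10, 15, 20, 40, 55],
    "max_depth": [3, 5, 10, 15, 20, 40, 55],
}

# Enumerate the full Cartesian product of the candidate values.
names = list(param_grid)
results = []
for values in product(*(param_grid[n] for n in names)):
    params = dict(zip(names, values))
    results.append((toy_score(**params), params))

best_score, best_params = min(results, key=lambda item: item[0])

print(len(results), "combinations evaluated")
print(best_params, round(best_score, 3))
```

With two lists of seven values the grid already has 49 points, and each added parameter multiplies that count, which is why exhaustive search is usually kept to a few parameters with short candidate lists.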

Bayesian tuning

from bayes_opt import BayesianOptimization

def rf_cv(num_leaves, max_depth, subsample, min_child_samples):
    val = cross_val_score(
        LGBMRegressor(objective='regression_l1', num_leaves=int(num_leaves), max_depth=int(max_depth), subsample=subsample, min_child_samples=int(min_child_samples)),
        X=train_X, y=train_y_ln, verbose=0, cv=5, scoring=make_scorer(mean_absolute_error)
    ).mean()
    # The optimizer maximizes its target, so return 1 - MAE
    return 1 - val

rf_bo = BayesianOptimization(
    rf_cv,
    {
        'num_leaves': (2, 100),
        'max_depth': (2, 100),
        'subsample': (0.1, 1),
        'min_child_samples': (2, 100)
    }
)

rf_bo.maximize()

# Convert the best target back to an MAE

1 - rf_bo.max['target']

Tips

The reduce_mem_usage function below reduces the memory a DataFrame occupies by downcasting the data type of each column.

import numpy as np

import pandas as pd

def reduce_mem_usage(df):
    """Iterate through all the columns of a dataframe and modify the data type
    to reduce memory usage.
    """
    start_mem = df.memory_usage().sum() / 1024 ** 2  # bytes to MB
    print('Memory usage of dataframe is {:.2f} MB'.format(start_mem))
    for col in df.columns:
        col_type = df[col].dtype
        if col_type != object:
            c_min = df[col].min()
            c_max = df[col].max()
            if str(col_type)[:3] == 'int':
                # Pick the narrowest integer type whose range contains the column
                if c_min > np.iinfo(np.int8).min and c_max < np.iinfo(np.int8).max:
                    df[col] = df[col].astype(np.int8)
                elif c_min > np.iinfo(np.int16).min and c_max < np.iinfo(np.int16).max:
                    df[col] = df[col].astype(np.int16)
                elif c_min > np.iinfo(np.int32).min and c_max < np.iinfo(np.int32).max:
                    df[col] = df[col].astype(np.int32)
                elif c_min > np.iinfo(np.int64).min and c_max < np.iinfo(np.int64).max:
                    df[col] = df[col].astype(np.int64)
            else:
                # Same idea for floating-point columns
                if c_min > np.finfo(np.float16).min and c_max < np.finfo(np.float16).max:
                    df[col] = df[col].astype(np.float16)
                elif c_min > np.finfo(np.float32).min and c_max < np.finfo(np.float32).max:
                    df[col] = df[col].astype(np.float32)
                else:
                    df[col] = df[col].astype(np.float64)
        else:
            # Object columns are stored as categories
            df[col] = df[col].astype('category')
    end_mem = df.memory_usage().sum() / 1024 ** 2  # bytes to MB
    print('Memory usage after optimization is: {:.2f} MB'.format(end_mem))
    print('Decreased by {:.1f}%'.format(100 * (start_mem - end_mem) / start_mem))
    return df

sample_feature = reduce_mem_usage(pd.read_csv('data_for_tree.csv'))

continuous_feature_names = [x for x in sample_feature.columns if x not in ['price', 'brand', 'model']]
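The core decision inside reduce_mem_usage is a range check: a column can be stored in a narrower type when its minimum and maximum both fit. The standard-library sketch below isolates that check for signed integers. The helper name and sample values are hypothetical, and it uses inclusive bounds, whereas the function above uses strict comparisons and therefore treats values exactly at a type's limits as not fitting.

```python
# Inclusive value ranges of the signed integer types, narrowest first.
INT_RANGES = [
    ("int8", -2**7, 2**7 - 1),
    ("int16", -2**15, 2**15 - 1),
    ("int32", -2**31, 2**31 - 1),
    ("int64", -2**63, 2**63 - 1),
]


def smallest_int_type(values):
    """Return the name of the narrowest signed integer type that holds every value."""
    lo, hi = min(values), max(values)
    for name, type_min, type_max in INT_RANGES:
        if type_min <= lo and hi <= type_max:
            return name
    raise OverflowError("values do not fit in int64")


print(smallest_int_type([0, 1, 1, 0]))
print(smallest_int_type([1991, 2015]))
print(smallest_int_type([0, 150000]))
```

Going from int64 to int8 cuts the storage per value from 8 bytes to 1, which is where most of the savings on flag-like and small-count columns come from.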

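Summary

In this task we completed the modeling and parameter tuning work and validated our model. We also applied some basic techniques to improve prediction accuracy; the improvement is shown in the figure below: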

plt.figure(figsize=(13,5))

sns.lineplot(x=['0_origin','1_log_transfer','2_L1_&_L2','3_change_model','4_parameter_tuning'], y=[1.36, 0.19, 0.19, 0.14, 0.13])